Background

In one of the earlier posts, we saw how to use JavaScript (JS) closures to hide variables from the console with a counter example. In this post, I will explain JS closures in more detail.

Closures in JavaScript

Quoting the definition of closures from the MDN web docs:

A closure is the combination of a function bundled together (enclosed) with references to its surrounding state (the lexical environment). In other words, a closure gives you access to an outer function's scope from an inner function. In JavaScript, closures are created every time a function is created, at function creation time.

It's important to note that a closure is not just a function but the combination of a function and a reference to its lexical environment.

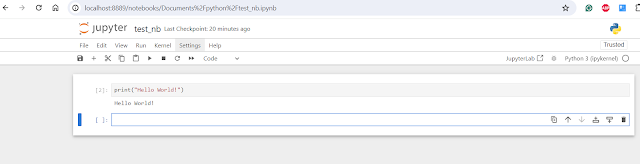

Let's take a simple example:

    (() => {
      var name = "Aniket";
      const displayName = () => {
        console.log(name);
      };
      displayName();
    })();

The (() => {})() pattern is a self-executing function, also known as an immediately invoked function expression (IIFE): an anonymous arrow function that is defined and called right away. Inside it, we define a variable called name and another arrow function, assigned to displayName, which prints the name variable to the console.

What is interesting to note here is that even though the variable name is local to the outermost anonymous function, we can still access it inside displayName. This is possible because of closures. When we created the arrow function and assigned it to the displayName constant, it created a closure consisting of the function itself plus a reference to its lexical environment, which includes the name variable defined in the outer scope.

NOTE: Nested functions have access to variables declared in their outer scope.
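One point worth making explicit is that a closure keeps a reference to the outer variable, not a copy of its value at the time the function was created. A minimal sketch, assuming hypothetical names (createGreeter, greeting, getGreeting, setGreeting) that are not part of the original example:

```javascript
// Hypothetical names, used only for illustration.
function createGreeter() {
  var greeting = "Hello";

  // Both inner functions close over the same greeting variable.
  const getGreeting = () => greeting;
  const setGreeting = (value) => {
    greeting = value;
  };

  return { getGreeting, setGreeting };
}

const greeter = createGreeter();
console.log(greeter.getGreeting()); // Hello

// Changing the variable through one closure is visible through the other,
// because both hold a reference to it rather than a snapshot.
greeter.setGreeting("Namaste");
console.log(greeter.getGreeting()); // Namaste
```

Note that greeting stays reachable through the two inner functions even though createGreeter has already returned.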

Understanding scopes

In the above example, the variable name was defined inside a function and therefore had function scope. It was accessible in that function and in the functions nested inside it, but not in the global scope.

Before ES6 there were just two scopes: function scope and global scope. Variables defined inside a function had function scope, whereas those defined outside any function had global scope. This came with its own challenges: a variable declared with var inside the braces of an if/else block is not local to that block, because blocks with curly braces did not create scopes. Declared outside any function, such a variable ends up in the global scope.

For example, see below:
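A minimal sketch of this behaviour, assuming a variable named check declared with var inside an if block at the top level of a script (the value assigned to it is illustrative):

```javascript
// Illustrative reconstruction: check is declared with var inside an if block.
if (true) {
  var check = "declared inside the if block";
}

// The braces of the if block did not create a scope, so check is still
// visible here. In a browser script this makes it a global variable.
console.log(check); // declared inside the if block
```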

Here the check variable, even though it was defined inside the if block, has become a globally scoped variable (unlike in languages such as Java, where it would have been local to the block).

To handle this, ES6 introduced two new declarations:

- let - defines a variable local to the enclosing block

- const - defines a constant, again local to the enclosing block, that cannot be reassigned

See below for example:
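A short sketch of block scoping with let and const, using hypothetical variable names (blockLet, blockConst, limit) chosen only for illustration:

```javascript
if (true) {
  let blockLet = "only visible inside this block";
  const blockConst = 42;
  console.log(blockLet, blockConst); // only visible inside this block 42
}

// Outside the block neither name exists. typeof is used here because it
// does not throw for an undeclared identifier.
console.log(typeof blockLet);   // undefined
console.log(typeof blockConst); // undefined

// const additionally forbids reassignment.
const limit = 10;
let errorName = "none";
try {
  limit = 20; // throws at runtime
} catch (err) {
  errorName = err.name;
}
console.log(errorName); // TypeError
```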

NOTE: A closure gives a function access to the scope of its outer function, but that outer function can itself be nested inside another function. In such cases, the innermost function has access to the scopes of all the enclosing functions via the scope chain.
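The chain described in the note can be sketched with three levels of nesting. The function and variable names below (outer, middle, inner and their values) are hypothetical:

```javascript
// Hypothetical names, chosen only to show three levels of nesting.
function outer() {
  const outerValue = "outer";

  function middle() {
    const middleValue = "middle";

    function inner() {
      const innerValue = "inner";
      // inner can read its own scope, middle's scope and outer's scope.
      return [innerValue, middleValue, outerValue].join(" -> ");
    }

    return inner();
  }

  return middle();
}

console.log(outer()); // inner -> middle -> outer
```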

Interesting examples

Now let's see some interesting examples before we wind this up.

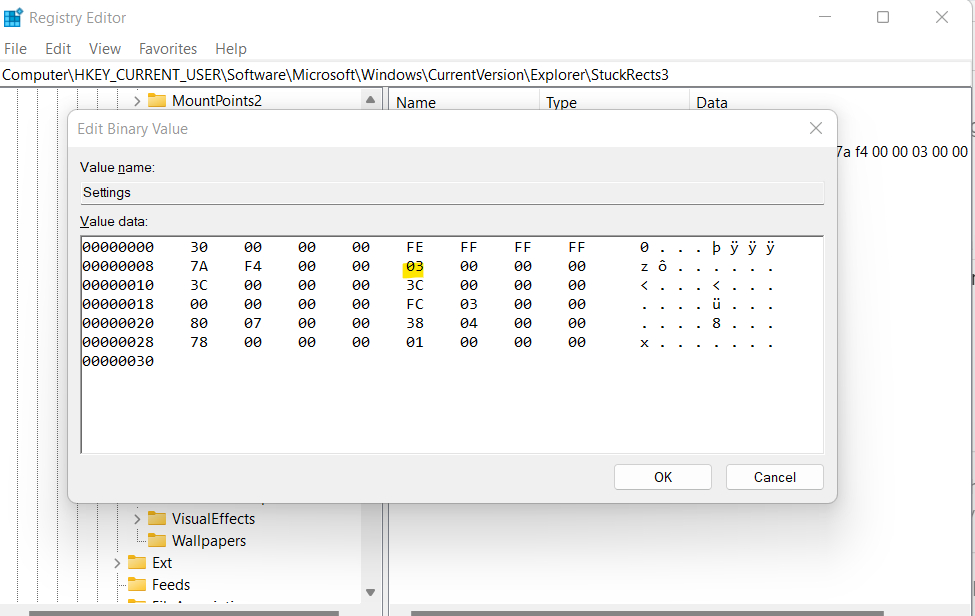

Look at the example below and try to predict the output:

    const myName = ["Aniket", "Abhijit", "Awantika"];

    // Schedule one log statement per name, three seconds from now
    for (var i = 0; i < myName.length; i++) {
      setTimeout(function () {
        console.log('Name: ' + myName[i] + ' at index: ' + i);
      }, 3000);
    }

The output is probably not what you expected: it prints undefined as the name and 3 as the index all three times. Let's try to understand why this happens.

When we pass a function to setTimeout, a closure is created: the function bundled with a reference to the environment of its outer scope. The loop creates three closures, but all of them share the same lexical environment, which holds a single variable i whose value keeps changing. This is because i is declared with var, so it is scoped to the enclosing function (or, as here, to the global scope) rather than to each loop iteration.

The value of i is read only when the callback actually runs, after the timeout expires and the event loop picks it up. By then the loop has already finished and i is 3, which is not a valid index because the array has three elements with indices 0 to 2. Hence each callback prints 3 as the index and undefined as the name, once for each closure created in the loop.

You can fix this by wrapping the callback in another function that receives the current value of i as an argument. Each call to the wrapper creates its own closure holding that value, so the callback handed to setTimeout no longer depends on the shared, globally scoped i. This can be written in two ways: one using the function keyword and one using an anonymous function.
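A minimal sketch of both variants. The helper names formatEntry and makeCallback are hypothetical, and the delay is shortened from the 3000 ms used earlier so the demo finishes quickly:

```javascript
const myName = ["Aniket", "Abhijit", "Awantika"];
const DELAY_MS = 100; // shortened from 3000 ms

// Hypothetical helper that builds the line to print.
const formatEntry = (index) =>
  "Name: " + myName[index] + " at index: " + index;

// Way 1: a wrapper declared with the function keyword. Each call gets its
// own index parameter, so each returned callback closes over a different value.
function makeCallback(index) {
  return function () {
    console.log(formatEntry(index));
  };
}

for (var i = 0; i < myName.length; i++) {
  setTimeout(makeCallback(i), DELAY_MS);
}

// Way 2: an anonymous wrapper invoked immediately with the current value of j.
for (var j = 0; j < myName.length; j++) {
  ((index) => {
    setTimeout(() => {
      console.log(formatEntry(index));
    }, DELAY_MS);
  })(j);
}
```

Both loops still use var. The difference is that the callbacks read the wrapper's own index parameter instead of the shared loop variable.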

Or you could simply change var to let in the for loop. This creates a block-scoped variable with a fresh binding for each iteration instead of a single shared one, so each callback sees its own value of i and the original code works as intended.
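To compare the two declarations without waiting for timers, the sketch below stores the callbacks in arrays and calls them after each loop has finished. The arrays withVar and withLet are hypothetical stand-ins for the callbacks that setTimeout would queue:

```javascript
const myName = ["Aniket", "Abhijit", "Awantika"];

// With var, all three callbacks share one i, which is 3 once the loop ends.
const withVar = [];
for (var i = 0; i < myName.length; i++) {
  withVar.push(() => "Name: " + myName[i] + " at index: " + i);
}

// With let, every iteration gets its own binding of j.
const withLet = [];
for (let j = 0; j < myName.length; j++) {
  withLet.push(() => "Name: " + myName[j] + " at index: " + j);
}

// Call the stored callbacks only after both loops are done.
console.log(withVar.map((callback) => callback()));
// [ 'Name: undefined at index: 3', 'Name: undefined at index: 3', 'Name: undefined at index: 3' ]

console.log(withLet.map((callback) => callback()));
// [ 'Name: Aniket at index: 0', 'Name: Abhijit at index: 1', 'Name: Awantika at index: 2' ]
```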
